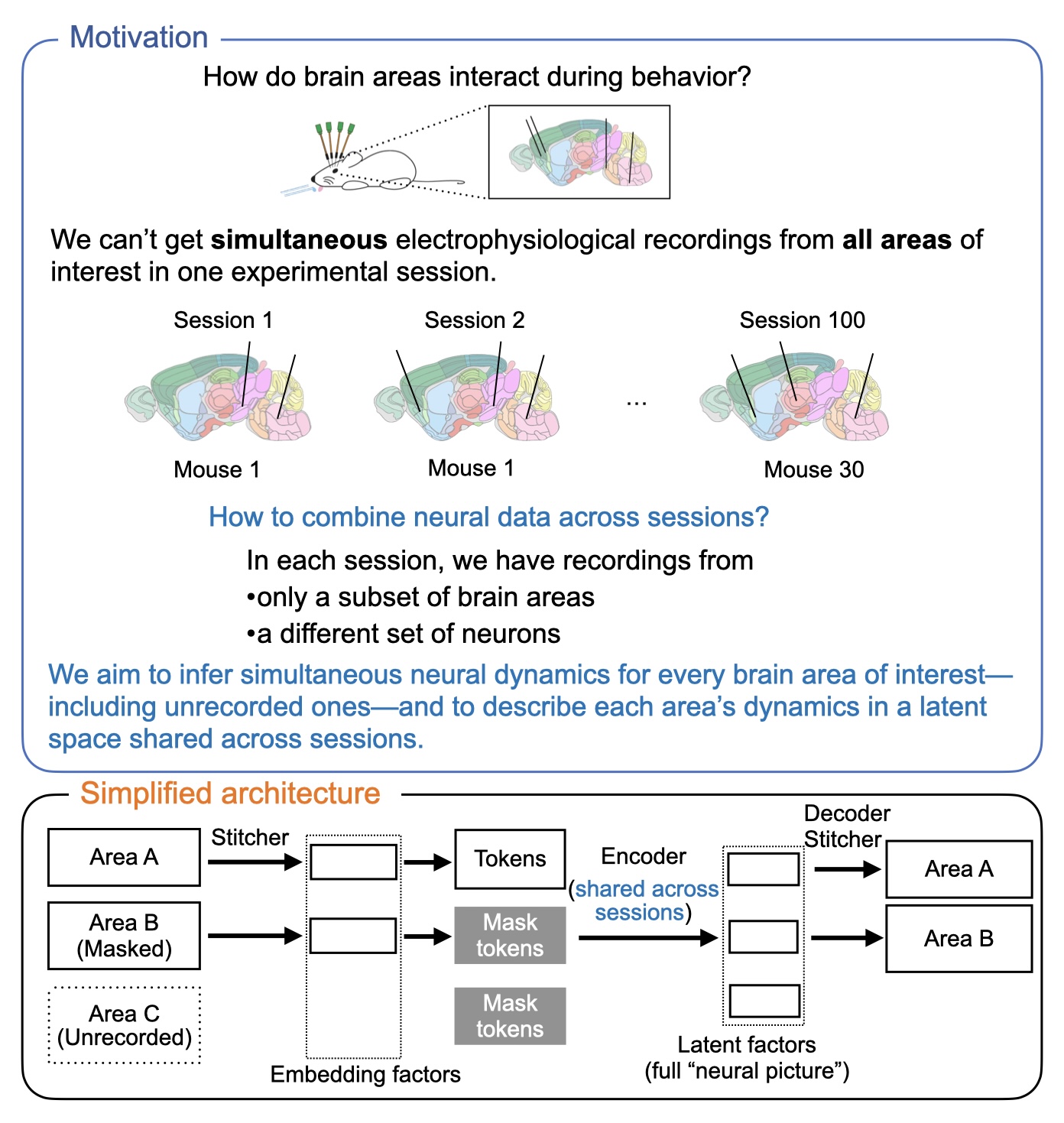

Inpainting the Neural Picture: Inferring Unrecorded Brain Area Dynamics from Multi-Animal Datasets

Understanding how distributed brain circuits drive an animal’s behavior requires recordings from interconnected cortical and subcortical areas. Although Neuropixels probes enable simultaneous recordings from multiple areas, no single experiment covers all areas of interest, and within any recorded area, different neurons are sampled across sessions and animals. This poses a central challenge: how can we integrate multi-animal datasets to study interactions among brain areas that are not recorded simultaneously in any single session? We introduce NeuroPaint, a masked autoencoding approach for inferring the dynamics of unrecorded brain areas. By training across animals with overlapping subsets of recorded areas, NeuroPaint learns to reconstruct neural dynamics in unrecorded areas by leveraging structure shared across individuals.

MAP Dataset | IBL Dataset | Code
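The core masked-autoencoding objective can be sketched in a few lines. This is a minimal sketch under stated assumptions, not NeuroPaint's implementation: it assumes each session's activity is binned into a (time bins, neurons) array with an area label per neuron, hides one recorded area at a time, and scores reconstruction only on the hidden neurons. The names `mask_one_area` and `masked_mse` are hypothetical, and a least-squares map stands in for the actual network.

```python
import numpy as np

def mask_one_area(x, area_labels, rng):
    """Hide every neuron of one randomly chosen recorded area.

    x: (time_bins, neurons) activity from one session.
    area_labels: (neurons,) array naming the area of each neuron.
    """
    hidden_area = rng.choice(np.unique(area_labels))
    mask = area_labels == hidden_area        # True for neurons the model must reconstruct
    x_in = x.copy()
    x_in[:, mask] = 0.0                      # the model never sees the hidden area
    return x_in, mask, hidden_area

def masked_mse(pred, target, mask):
    """Reconstruction error counted only on the hidden neurons."""
    return float(np.mean((pred[:, mask] - target[:, mask]) ** 2))

# Toy session: two areas driven by a shared 2-D latent plus private noise.
rng = np.random.default_rng(0)
latent = rng.standard_normal((500, 2))
area_a = latent @ rng.standard_normal((2, 10)) + 0.1 * rng.standard_normal((500, 10))
area_b = latent @ rng.standard_normal((2, 8)) + 0.1 * rng.standard_normal((500, 8))
x = np.hstack([area_a, area_b])
labels = np.array(["A"] * 10 + ["B"] * 8)

x_in, mask, hidden = mask_one_area(x, labels, rng)
# Hypothetical stand-in for the network: a least-squares map from visible to hidden neurons.
weights, *_ = np.linalg.lstsq(x_in[:, ~mask], x[:, mask], rcond=None)
pred = np.zeros_like(x)
pred[:, mask] = x_in[:, ~mask] @ weights
loss = masked_mse(pred, x, mask)
```

Because the loss only sees masked neurons, the model is pushed to predict one area from the others; training across sessions in which different areas were recorded is what would let a model of this kind fill in areas missing from a given session.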

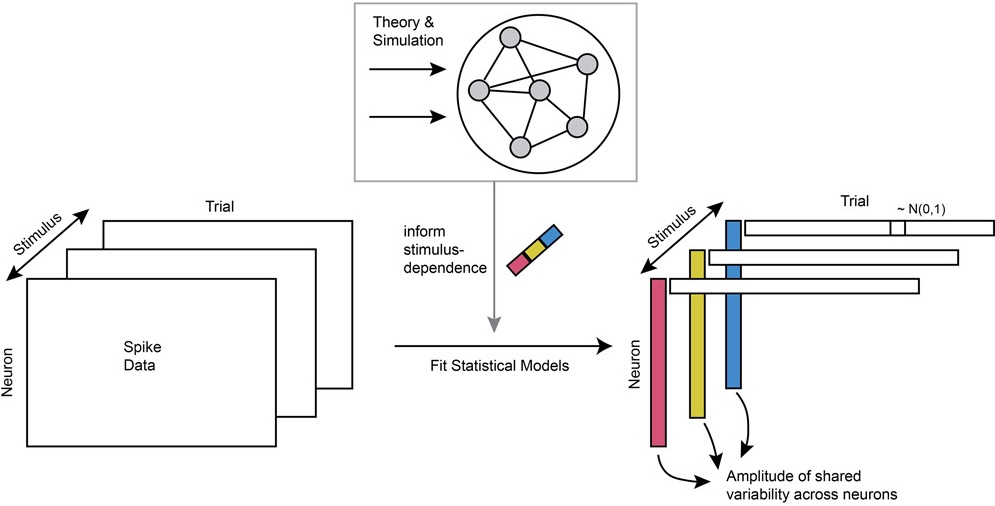

Stimulus-dependent communication within and between visual areas

In collaboration with Dr. Adam Kohn’s lab at Albert Einstein College of Medicine, I analyzed spiking data recorded simultaneously from monkey primary (V1) and secondary (V2) visual cortex with Utah arrays, tetrodes, and Neuropixels probes. Previous work showed that trial-to-trial variability is shared not only within each visual area but also between visual areas, which is often interpreted as communication between areas. However, how this communication depends on the stimulus had remained unexplored. I addressed this gap by developing statistical models of neural data recorded simultaneously from two visual areas. Guided by the circuit mechanisms underlying shared variability, I designed novel statistical models that concisely describe the stimulus dependence of within-area and inter-areal shared variability. This work characterized stimulus-dependent "communication" (shared variability) within and across areas with a parsimonious statistical model (the generalized affine model) and provided a simple circuit-dynamics explanation for this dependence.

Dataset 1 | Dataset 2 | Code
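To make "stimulus-dependent shared variability" concrete, here is a sketch of a standard affine form in which each neuron's response combines a shared multiplicative gain g and a shared additive offset h: r_i = f_i(s) * (1 + a_i * g) + b_i * h + eps_i. This exact parameterization, the coupling values, and the function names are assumptions for illustration; the generalized affine model used in this project may be parameterized differently. Because the gain term scales with the mean response f(s), the covariance it induces changes with the stimulus, whereas the additive term does not.

```python
import numpy as np

def affine_predicted_cov(f, a, b, var_g, var_h, var_private):
    """Covariance implied by r = f * (1 + a * g) + b * h + eps,
    with independent zero-mean g (gain), h (offset), and private noise eps."""
    gain_coupling = a * f                          # multiplicative term scales with tuning
    return (var_g * np.outer(gain_coupling, gain_coupling)
            + var_h * np.outer(b, b)
            + var_private * np.eye(len(f)))

def simulate_affine(f, a, b, var_g, var_h, var_private, n_trials, rng):
    """Draw n_trials responses (trials x neurons) from the affine model."""
    g = rng.normal(0.0, np.sqrt(var_g), size=(n_trials, 1))   # shared gain fluctuation
    h = rng.normal(0.0, np.sqrt(var_h), size=(n_trials, 1))   # shared additive fluctuation
    eps = rng.normal(0.0, np.sqrt(var_private), size=(n_trials, len(f)))
    return f * (1.0 + a * g) + b * h + eps

rng = np.random.default_rng(1)
a = np.array([0.2, 0.1, 0.15, 0.05])     # per-neuron gain coupling (hypothetical values)
b = np.array([1.0, 0.5, -0.5, 0.8])      # per-neuron offset coupling (hypothetical values)
f_weak = np.array([2.0, 4.0, 3.0, 1.0])  # mean responses to a weak stimulus
f_strong = 4.0 * f_weak                  # mean responses to a strong stimulus

results = {}
for name, f in [("weak", f_weak), ("strong", f_strong)]:
    r = simulate_affine(f, a, b, 1.0, 1.0, 1.0, 100_000, rng)
    empirical = np.cov(r, rowvar=False)
    predicted = affine_predicted_cov(f, a, b, 1.0, 1.0, 1.0)
    results[name] = (empirical, predicted)
```

The same decomposition applies block-wise: if some neurons belong to V1 and others to V2, the cross-area block of the covariance plays the role of inter-areal shared variability, so in this toy model a stimulus that raises mean responses also raises the gain-driven part of both within-area and between-area covariance.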

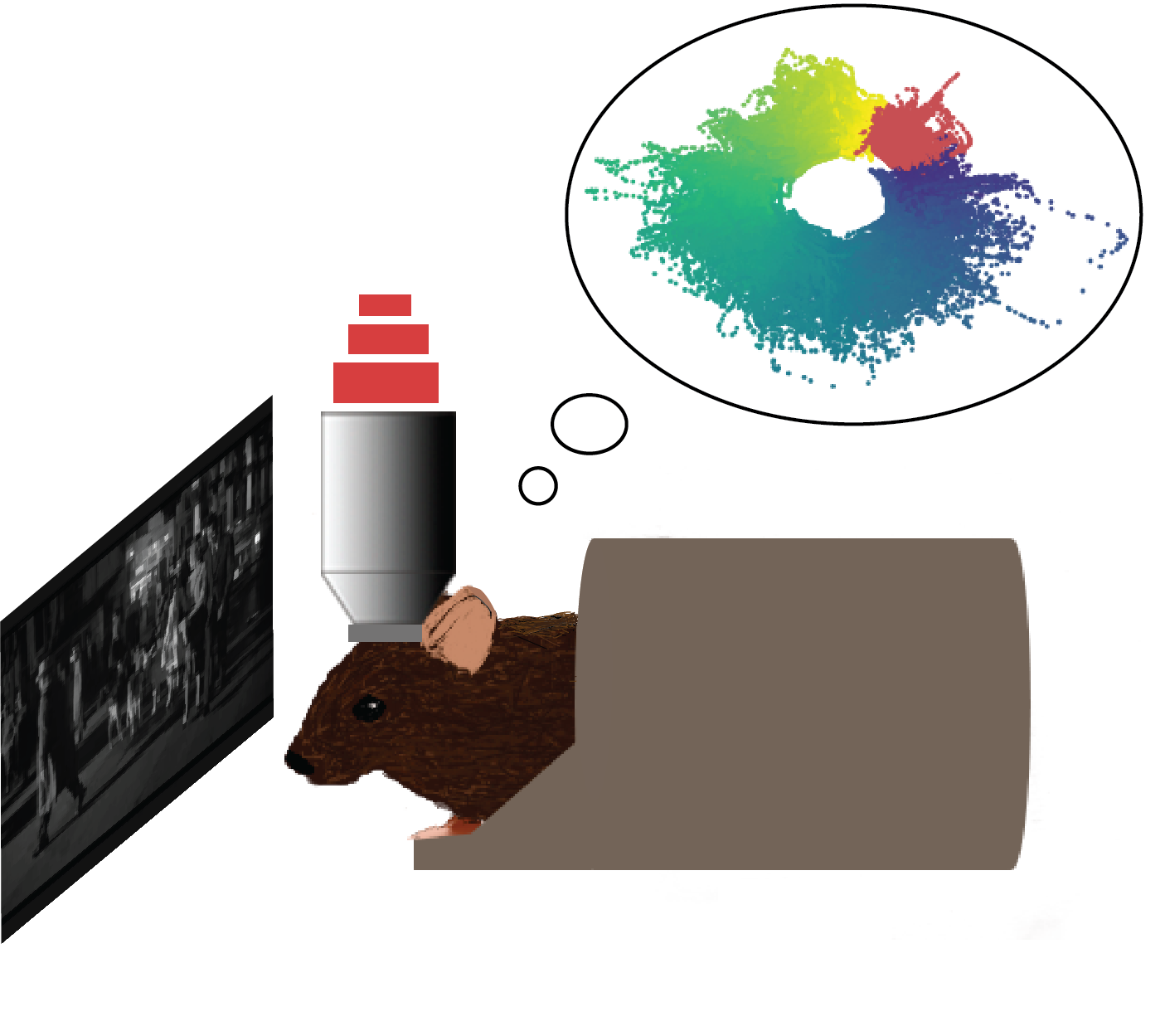

Stable representation of a naturalistic movie emerges from unstable single-neuron responses

In collaboration with Dr. Michael Goard’s lab at UC Santa Barbara, I analyzed chronic calcium imaging data from mouse primary visual cortex. We found that single-neuron responses to a repeated naturalistic movie drifted across weeks. However, by applying a dimensionality reduction method (Isomap) and an unsupervised learning method (SPUD) to population activity, we extracted a one-dimensional representation of time within the movie that remained stable across weeks.

Further analysis showed that this stable representation was mediated by the precise timing of, and coordination among, episodic activity in single-neuron responses.

Dataset | TCA | SPUD
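The dimensionality-reduction step can be illustrated with a from-scratch Isomap (k-nearest-neighbor graph, graph shortest paths, then classical multidimensional scaling) applied to simulated population activity. This is a minimal sketch, not the pipeline used in this project: the Gaussian time-tuned neurons, the noise level, `n_neighbors=8`, and the helper names `isomap_1d` and `rank_corr` are assumptions chosen for illustration, and SPUD is not reproduced here. If activity traces a one-dimensional curve through neural state space as the movie plays, the first Isomap coordinate should recover movie time up to sign.

```python
import numpy as np

def isomap_1d(X, n_neighbors=8):
    """Minimal Isomap: kNN graph -> geodesic distances -> classical MDS (1 component).
    X: (frames, neurons) population activity."""
    n = X.shape[0]
    d = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(-1))   # Euclidean distances
    graph = np.full((n, n), np.inf)
    nn = np.argsort(d, axis=1)[:, 1:n_neighbors + 1]               # skip self at index 0
    rows = np.repeat(np.arange(n), n_neighbors)
    graph[rows, nn.ravel()] = d[rows, nn.ravel()]
    graph = np.minimum(graph, graph.T)                             # symmetric kNN graph
    np.fill_diagonal(graph, 0.0)
    for k in range(n):                                             # Floyd-Warshall shortest paths
        graph = np.minimum(graph, graph[:, k:k + 1] + graph[k:k + 1, :])
    if np.isinf(graph).any():
        raise ValueError("kNN graph is disconnected; increase n_neighbors")
    j = np.eye(n) - 1.0 / n                                        # centering matrix
    b = -0.5 * j @ (graph ** 2) @ j                                # double-centered squared geodesics
    vals, vecs = np.linalg.eigh(b)                                 # eigenvalues in ascending order
    return vecs[:, -1] * np.sqrt(vals[-1])

def rank_corr(x, y):
    """Spearman correlation via ranks (no ties expected for continuous data)."""
    rx = np.argsort(np.argsort(x))
    ry = np.argsort(np.argsort(y))
    return np.corrcoef(rx, ry)[0, 1]

rng = np.random.default_rng(0)
movie_time = np.linspace(0.0, 1.0, 150)            # frames of one movie repeat
centers = np.linspace(0.0, 1.0, 50)                # hypothetical preferred times of 50 neurons
activity = np.exp(-(movie_time[:, None] - centers[None, :]) ** 2 / (2 * 0.05 ** 2))
activity += 0.005 * rng.standard_normal(activity.shape)

embedding = isomap_1d(activity)
rho = abs(rank_corr(embedding, movie_time))        # eigenvector sign is arbitrary
```

Geodesic rather than straight-line distances matter here: frames far apart in the movie have nearly non-overlapping activity, so their Euclidean distances saturate, while path length along the neighbor graph keeps growing with elapsed movie time.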

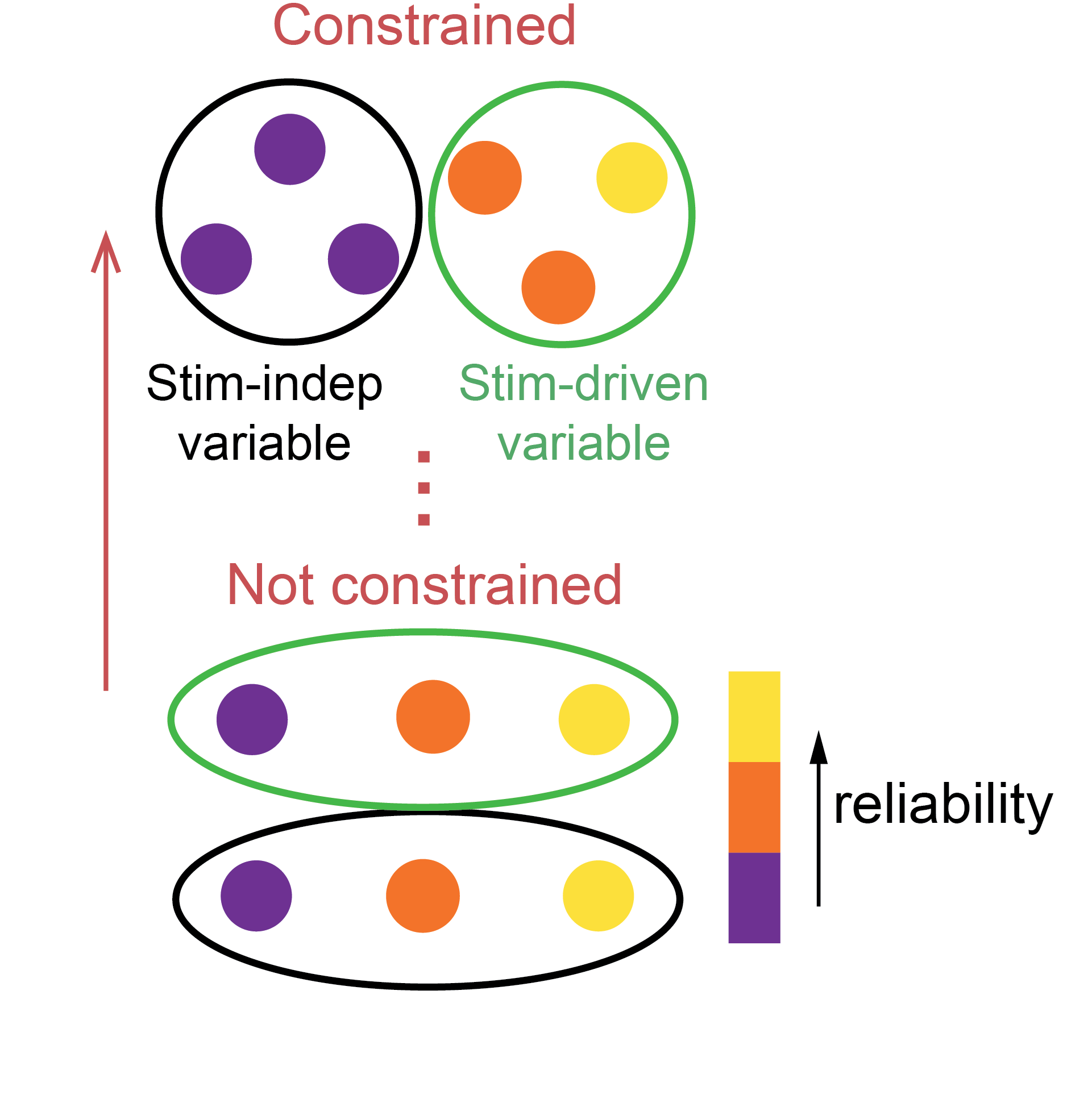

Mixed representation of stimulus-driven and stimulus-independent variables in single neurons

Most neurons in visual cortex respond highly variably from trial to trial during repeated visual stimulation, and it is unclear how such neurons contribute to encoding different variables. Using a multi-way analysis method, tensor component analysis (TCA), we extracted two types of latent factors shared across neurons:

- latent factors consistent across trials

- latent factors inconsistent across trials

We found that a single neuron's response reliability imposes only a weak constraint on its encoding capabilities.
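TCA fits a low-rank CP (canonical polyadic) decomposition to a neurons x time x trials tensor, giving each component a neuron factor, a within-trial time factor, and a trial factor. A minimal alternating-least-squares sketch follows; it is not the code used in this work, and the synthetic tensor, the rank, the function names, and the coefficient-of-variation score used here to separate trial-consistent from trial-inconsistent factors are illustrative assumptions.

```python
import numpy as np

def unfold(tensor, mode):
    """Matricize: rows index `mode`, columns the remaining axes in C order."""
    return np.moveaxis(tensor, mode, 0).reshape(tensor.shape[mode], -1)

def khatri_rao(u, v):
    """Column-wise Kronecker product; row index is i * v.shape[0] + j."""
    return (u[:, None, :] * v[None, :, :]).reshape(-1, u.shape[1])

def cp_als(tensor, rank, n_iter=500, seed=0):
    """Rank-`rank` CP decomposition of a 3-way tensor by alternating least squares."""
    rng = np.random.default_rng(seed)
    factors = [rng.standard_normal((dim, rank)) for dim in tensor.shape]
    for _ in range(n_iter):
        for mode in range(3):
            u, v = [factors[m] for m in range(3) if m != mode]
            gram = (u.T @ u) * (v.T @ v)                  # Hadamard product of Gram matrices
            factors[mode] = unfold(tensor, mode) @ khatri_rao(u, v) @ np.linalg.pinv(gram)
    return factors

# Synthetic neurons x time x trials tensor with two components (hypothetical data).
rng = np.random.default_rng(1)
n_neurons, n_time, n_trials = 20, 30, 40
t = np.linspace(0.0, 1.0, n_time)
neuron_f = rng.uniform(0.0, 1.0, (n_neurons, 2))
time_f = np.stack([np.exp(-(t - 0.3) ** 2 / 0.01), np.exp(-(t - 0.7) ** 2 / 0.01)], axis=1)
trial_f = np.stack([np.ones(n_trials), rng.uniform(0.1, 2.0, n_trials)], axis=1)
data = np.einsum("ir,jr,kr->ijk", neuron_f, time_f, trial_f)

neurons, times, trials = cp_als(data, rank=2)
recon = np.einsum("ir,jr,kr->ijk", neurons, times, trials)
rel_error = np.linalg.norm(recon - data) / np.linalg.norm(data)
# Low coefficient of variation = trial-consistent factor; high = trial-inconsistent.
trial_cv = trials.std(axis=0) / np.abs(trials.mean(axis=0))
```

In this toy tensor, the component whose trial factor stays nearly constant plays the role of a trial-consistent factor and the one whose trial factor fluctuates plays the role of a trial-inconsistent factor; a neuron's loadings on the two components then describe how it mixes them.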